I’ve been building a small roguelite autobattler for the Three.js Journey challenge and community. The core loop is simple and will feel familiar if you play these kinds of games: you create a conductor, send a train across an overworld map, pick routes, collect resources, add train cars, and resolve battles through autobattle systems.

One feature I wanted to experiment with was local AI generation in the browser, not as the core mechanic, but as a flavor layer. That became the Tome of Wonder.

The Tome of Wonder is an opt-in feature that uses Transformers.js to generate conductor backstories and personalize overworld events based on that character. During conductor creation, the player can click a dice icon to download the Tome locally. Once it is ready, the game can generate a short backstory for that conductor. Later, when the player hits event nodes on the overworld map, the Tome can use that conductor’s race, description, tags, and backstory to rewrite the event text.
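As a rough sketch of how that conductor context could be handed to the model, here is one way to build chat-style messages from the fields mentioned above. The `Conductor` shape, the `buildEventMessages` name, and the instruction wording are all assumptions for illustration, not the game's actual prompt.

```typescript
// Hypothetical shapes: the post names these fields but does not show the real types.
interface Conductor {
  race: string;
  description: string;
  tags: string[];
  backstory: string;
}

interface ChatMessage {
  role: "system" | "user";
  content: string;
}

// Build a two-message prompt: fixed instructions, then conductor context plus the authored event.
function buildEventMessages(conductor: Conductor, authoredEventJson: string): ChatMessage[] {
  const persona = [
    `Race: ${conductor.race}`,
    `Description: ${conductor.description}`,
    `Tags: ${conductor.tags.join(", ")}`,
    `Backstory: ${conductor.backstory}`,
  ].join("\n");

  return [
    {
      role: "system",
      // Assumed instruction text; the real prompt is not shown in this post.
      content:
        "Rewrite the event text to fit the conductor. Keep every choice id unchanged. Reply with JSON only.",
    },
    { role: "user", content: `${persona}\n\nEvent:\n${authoredEventJson}` },
  ];
}
```

Keeping the authored event in the prompt as JSON gives the model a shape to mirror back, which makes the later validation step simpler.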

The first version used a smaller Gemma model while I proved out the browser flow. The Tome now uses Gemma 4 E2B, with the ONNX model published for Transformers.js:

onnx-community/gemma-4-E2B-it-ONNX

This model takes longer to download than the Gemma 3 model I used at first, but the tradeoff is worthwhile for richer backstory generation. Since the Tome is optional and cached in the browser after download, the player can choose when to pay that first-use cost.

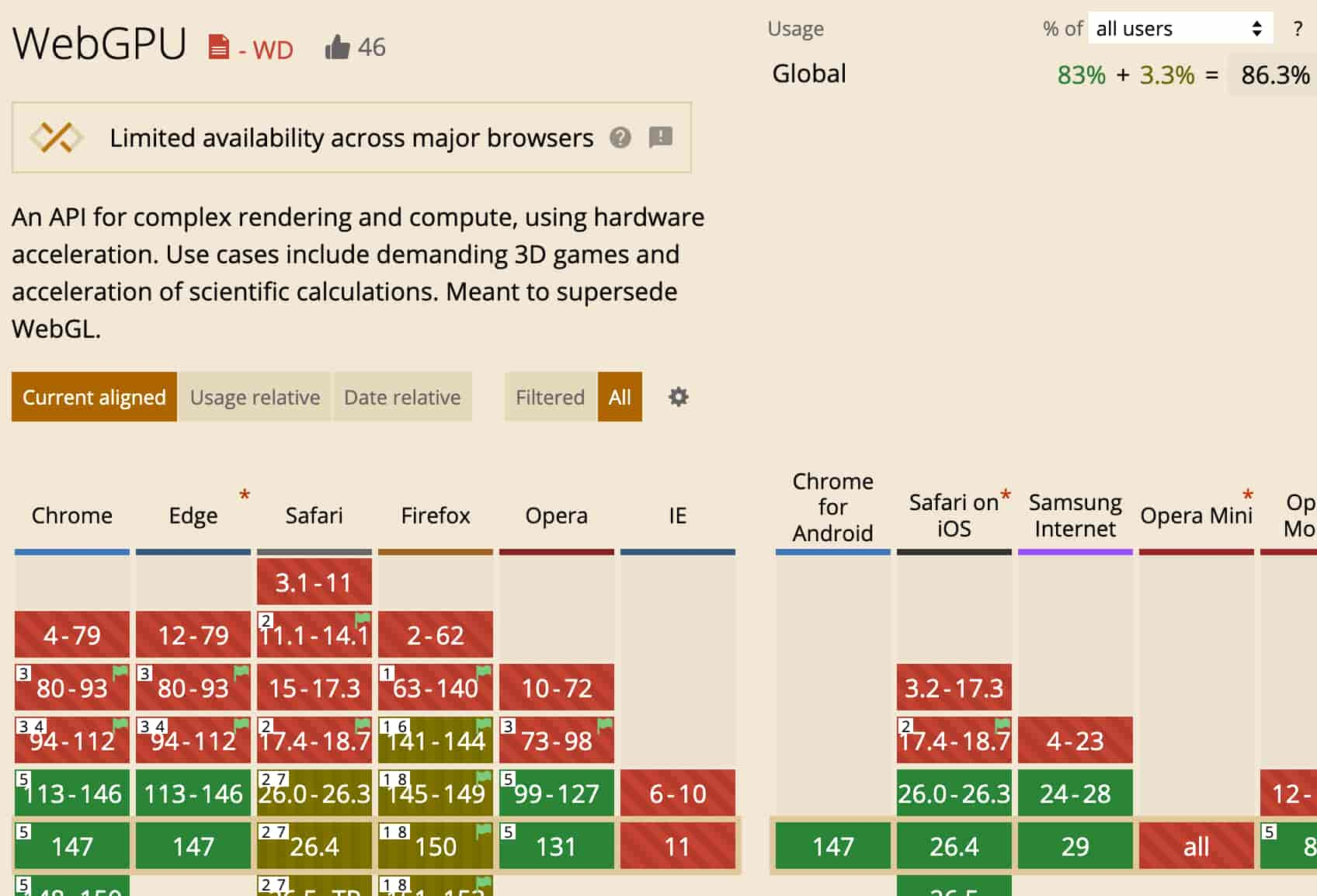

The current implementation uses @huggingface/transformers in the browser with WebGPU and q4 quantization. That means the model weights are stored in a compressed 4-bit format, so the model takes less memory and downloads faster. The goal is not to have the model own the game design. Rewards, choice IDs, and balance all stay authored and deterministic. The model only gets to personalize the narrative wrapper: event titles, summaries, choice labels, and outcome text. That design choice matters a lot for games. If the LLM fails, returns malformed JSON, or the browser does not support the feature, the authored event still works and the player can keep playing. No server call, no account, no hard dependency.
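To make that boundary concrete, here is a minimal sketch of merging model output onto an authored event. The `GameEvent` and `EventChoice` shapes, the 280-character cap, and the regex-based plain-text cleanup are assumptions for illustration, not the game's actual code. The idea is that only narrative fields are ever read from the model, and anything unusable falls back to the authored text.

```typescript
// Hypothetical shapes for illustration; the game's real types are not shown in this post.
interface EventChoice {
  id: string;          // authored, never taken from the model
  reward: number;      // authored, never taken from the model
  label: string;       // narrative, may be personalized
  outcomeText: string; // narrative, may be personalized
}

interface GameEvent {
  title: string;
  summary: string;
  choices: EventChoice[];
}

// Assumed cleanup: strip tag-like and common Markdown characters, collapse whitespace.
function toPlainText(value: string): string {
  return value
    .replace(/<[^>]*>/g, "")
    .replace(/[*_`#>\[\]]/g, "")
    .replace(/\s+/g, " ")
    .trim();
}

// Use the model's text only if it is a non-empty string within the length cap.
function textOr(fallback: string, value: unknown, maxLength = 280): string {
  if (typeof value !== "string") return fallback;
  const clean = toPlainText(value);
  return clean.length > 0 && clean.length <= maxLength ? clean : fallback;
}

function personalizeEvent(authored: GameEvent, modelOutput: string): GameEvent {
  let parsed: unknown;
  try {
    parsed = JSON.parse(modelOutput);
  } catch {
    return authored; // malformed JSON: play the authored event unchanged
  }
  if (typeof parsed !== "object" || parsed === null) return authored;

  const draft = parsed as Record<string, unknown>;
  const draftChoices = Array.isArray(draft.choices) ? draft.choices : [];

  return {
    title: textOr(authored.title, draft.title),
    summary: textOr(authored.summary, draft.summary),
    // Iterate the authored choices so the model cannot add, remove, or reorder them.
    choices: authored.choices.map((choice) => {
      const match = draftChoices.find(
        (c): c is Record<string, unknown> =>
          typeof c === "object" && c !== null && (c as Record<string, unknown>).id === choice.id
      );
      return {
        ...choice, // id and reward always come from the authored choice
        label: textOr(choice.label, match?.label),
        outcomeText: textOr(choice.outcomeText, match?.outcomeText),
      };
    }),
  };
}
```

Because the merge starts from the authored choices, a model that invents a reward or a new choice ID has no effect on gameplay. A regex cleanup like this is not a security boundary on its own, so rendering the result as text rather than HTML is still the safer default.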

A few implementation details made the feature feel better:

- The model download is opt-in. Nothing heavy happens unless the player clicks.

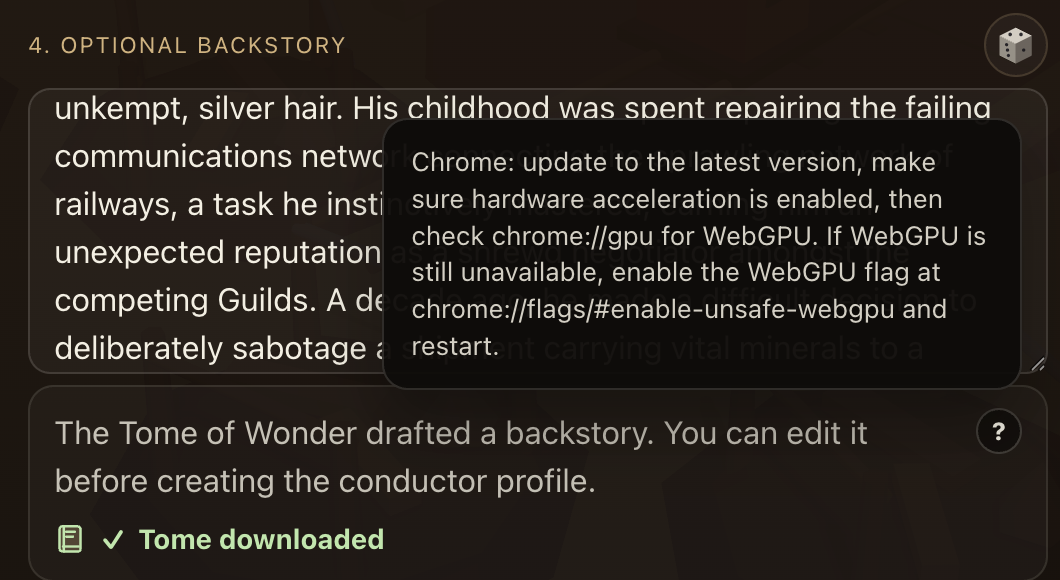

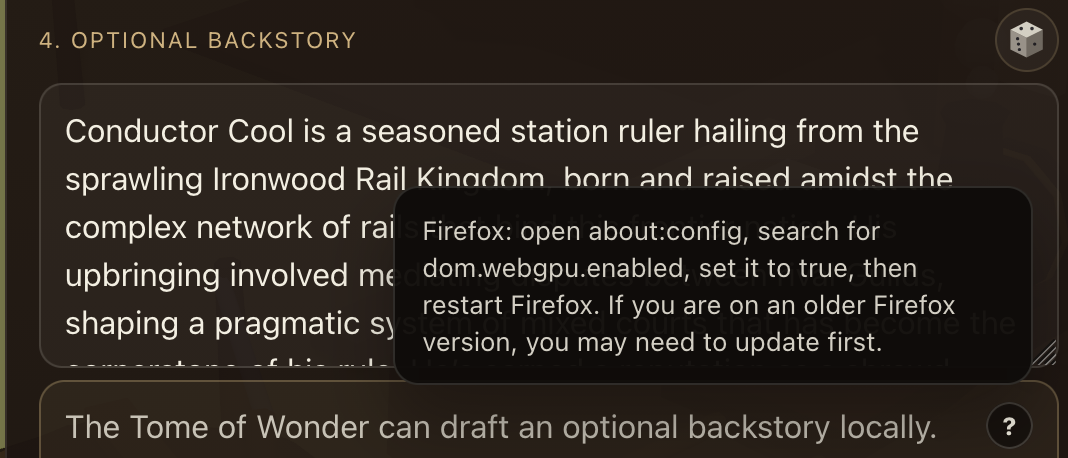

- The UI shows download progress and a “Tome downloaded” state.

- If WebGPU is missing, the game shows browser-specific tooltip guidance for enabling it.

- Generated text is sanitized into plain text to reduce the risk of stray formatting or markup injection.

- Event generation only runs after the Tome is already downloaded, so events do not surprise-trigger a model download.

- When the browser cannot run the Tome, the fallback is always authored content.
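The opt-in and gating rules in this list can be sketched as a small state machine. The state names, action names, and the downloading label text below are assumptions for illustration, not the game's actual code. Only the “Tome downloaded” label comes from the UI described above.

```typescript
// Hypothetical state model; names are assumptions, not the game's real implementation.
type TomeState =
  | { status: "idle" }
  | { status: "downloading"; percent: number }
  | { status: "ready" }
  | { status: "failed" };

type TomeAction =
  | { type: "playerClickedDice" }
  | { type: "progress"; loadedBytes: number; totalBytes: number }
  | { type: "loaded" }
  | { type: "error" };

function tomeReducer(state: TomeState, action: TomeAction): TomeState {
  switch (action.type) {
    case "playerClickedDice":
      // Only an explicit click starts a download; clicks while busy or ready are ignored.
      return state.status === "idle" || state.status === "failed"
        ? { status: "downloading", percent: 0 }
        : state;
    case "progress": {
      if (state.status !== "downloading") return state;
      const raw = action.totalBytes > 0 ? (action.loadedBytes / action.totalBytes) * 100 : 0;
      // Clamp to 0..100 and never move backwards, so the bar does not jitter.
      const percent = Math.max(state.percent, Math.min(100, Math.round(raw)));
      return { status: "downloading", percent };
    }
    case "loaded":
      return state.status === "downloading" ? { status: "ready" } : state;
    case "error":
      return state.status === "downloading" ? { status: "failed" } : state;
  }
}

// Event nodes consult this gate, so hitting an event can never start a download.
function canPersonalizeEvents(state: TomeState): boolean {
  return state.status === "ready";
}

function statusLabel(state: TomeState): string {
  switch (state.status) {
    case "idle":
      return "Download the Tome";
    case "downloading":
      return `Downloading Tome: ${state.percent}%`;
    case "ready":
      return "Tome downloaded";
    case "failed":
      return "Download failed, click to retry";
  }
}
```

With this shape, an event node simply checks `canPersonalizeEvents` and shows the authored text whenever it returns `false`.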

WebGPU support is getting much better, but it still varies enough that you should check compatibility and design graceful fallbacks. The game runs a browser check first and, when support is available but not enabled, shows browser-specific tooltip guidance for turning it on.
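A compatibility check along these lines might look like the sketch below. It relies on the standard `navigator.gpu.requestAdapter()` call, which resolves to `null` when no suitable adapter is found. The `detectWebGpu` name, the result labels, and the small structural types are assumptions for illustration, added so the sketch compiles without extra type packages.

```typescript
// Minimal structural types so the sketch compiles without @webgpu/types.
interface GpuLike {
  requestAdapter(): Promise<unknown>;
}

interface NavigatorLike {
  gpu?: GpuLike;
}

// Hypothetical result labels for driving the UI.
type WebGpuSupport = "available" | "no-adapter" | "missing-api";

async function detectWebGpu(nav: NavigatorLike): Promise<WebGpuSupport> {
  // No navigator.gpu at all: the API is absent or disabled in this browser.
  if (!nav.gpu) return "missing-api";
  try {
    // The API exists, but the browser may still fail to provide a usable adapter.
    const adapter = await nav.gpu.requestAdapter();
    return adapter ? "available" : "no-adapter";
  } catch {
    return "no-adapter";
  }
}
```

In the browser this would be called with the global `navigator` object. Anything other than `"available"` keeps the dice icon disabled and shows the guidance tooltip, and the authored content carries the run.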

The thing I like about this pattern is that it treats local AI like a game feel layer. It does not replace handcrafted systems. It adds a little personality, lets the run feel more specific to the character, and gives web game devs a practical way to experiment with Transformers.js without betting the whole game on generation working perfectly on every device.

I hope to see more web games experiment with Transformers.js. If this inspires you to build something, please share it. I’d love to see it.

Want to try out my game and the Tome of Wonder? You can play it here: https://learning-train.vercel.app/.

Keep in mind that this is just a hackathon project, full of bugs and unbalanced systems, so be prepared, brave conductors 🤓!