What are you?

I asked both ChatGPT and Claude 2 what they are. These are the responses:

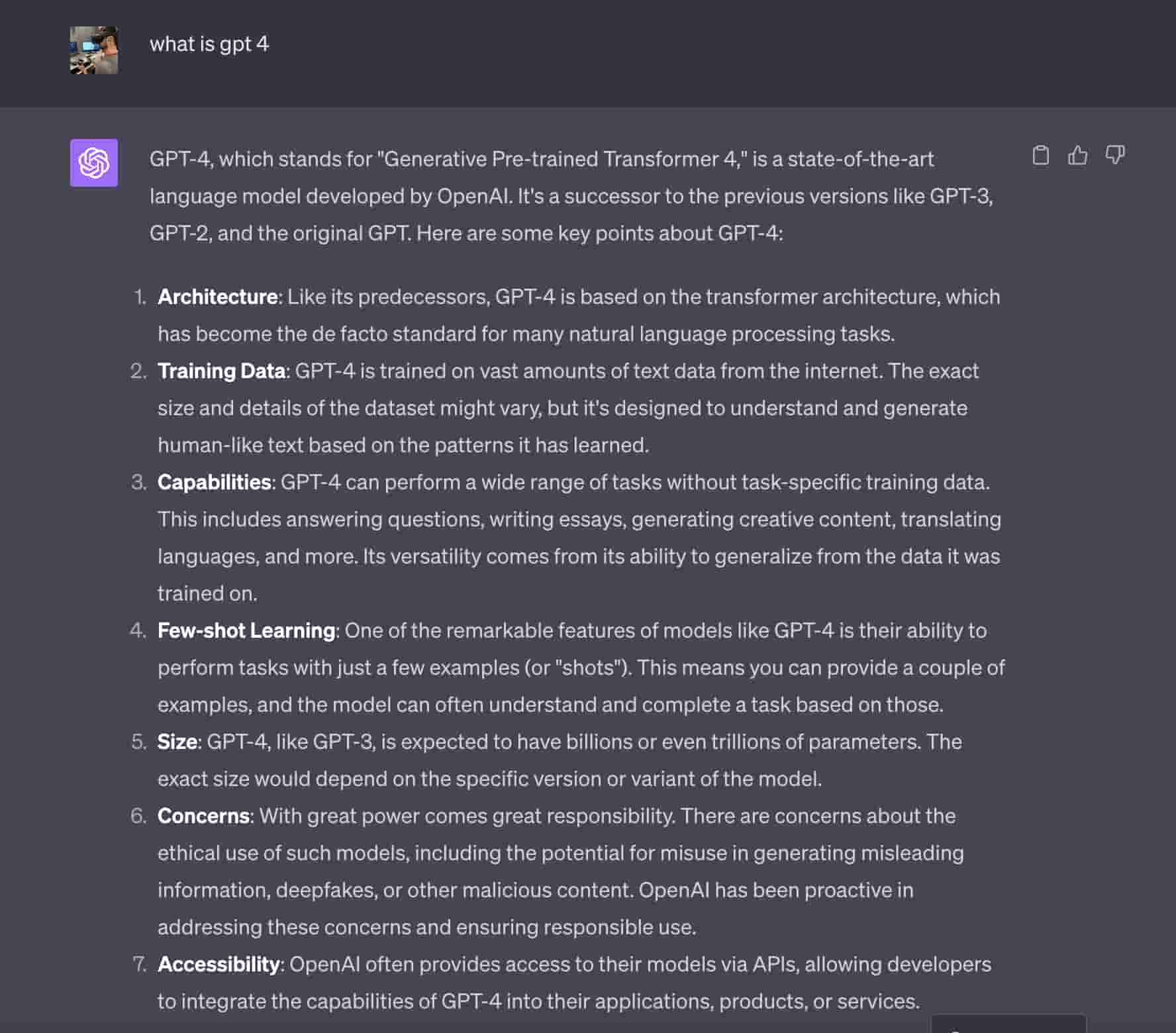

ChatGPT

“What is gpt-4?”

GPT-4, which stands for “Generative Pre-trained Transformer 4,” is a state-of-the-art language model developed by OpenAI. It’s a successor to the previous versions like GPT-3, GPT-2, and the original GPT. Here are some key points about GPT-4:

Architecture: Like its predecessors, GPT-4 is based on the transformer architecture, which has become the de facto standard for many natural language processing tasks.

Training Data: GPT-4 is trained on vast amounts of text data from the internet. The exact size and details of the dataset might vary, but it’s designed to understand and generate human-like text based on the patterns it has learned.

Capabilities: GPT-4 can perform a wide range of tasks without task-specific training data. This includes answering questions, writing essays, generating creative content, translating languages, and more. Its versatility comes from its ability to generalize from the data it was trained on.

Few-shot Learning: One of the remarkable features of models like GPT-4 is their ability to perform tasks with just a few examples (or “shots”). This means you can provide a couple of examples, and the model can often understand and complete a task based on those.

Size: GPT-4, like GPT-3, is expected to have billions or even trillions of parameters. The exact size would depend on the specific version or variant of the model.

Concerns: With great power comes great responsibility. There are concerns about the ethical use of such models, including the potential for misuse in generating misleading information, deepfakes, or other malicious content. OpenAI has been proactive in addressing these concerns and ensuring responsible use.

Accessibility: OpenAI often provides access to their models via APIs, allowing developers to integrate the capabilities of GPT-4 into their applications, products, or services.

It’s worth noting that while GPT-4 is incredibly advanced, it’s not infallible. It can make mistakes, and its responses are based on patterns in the data it was trained on, rather than any form of true understanding or consciousness.

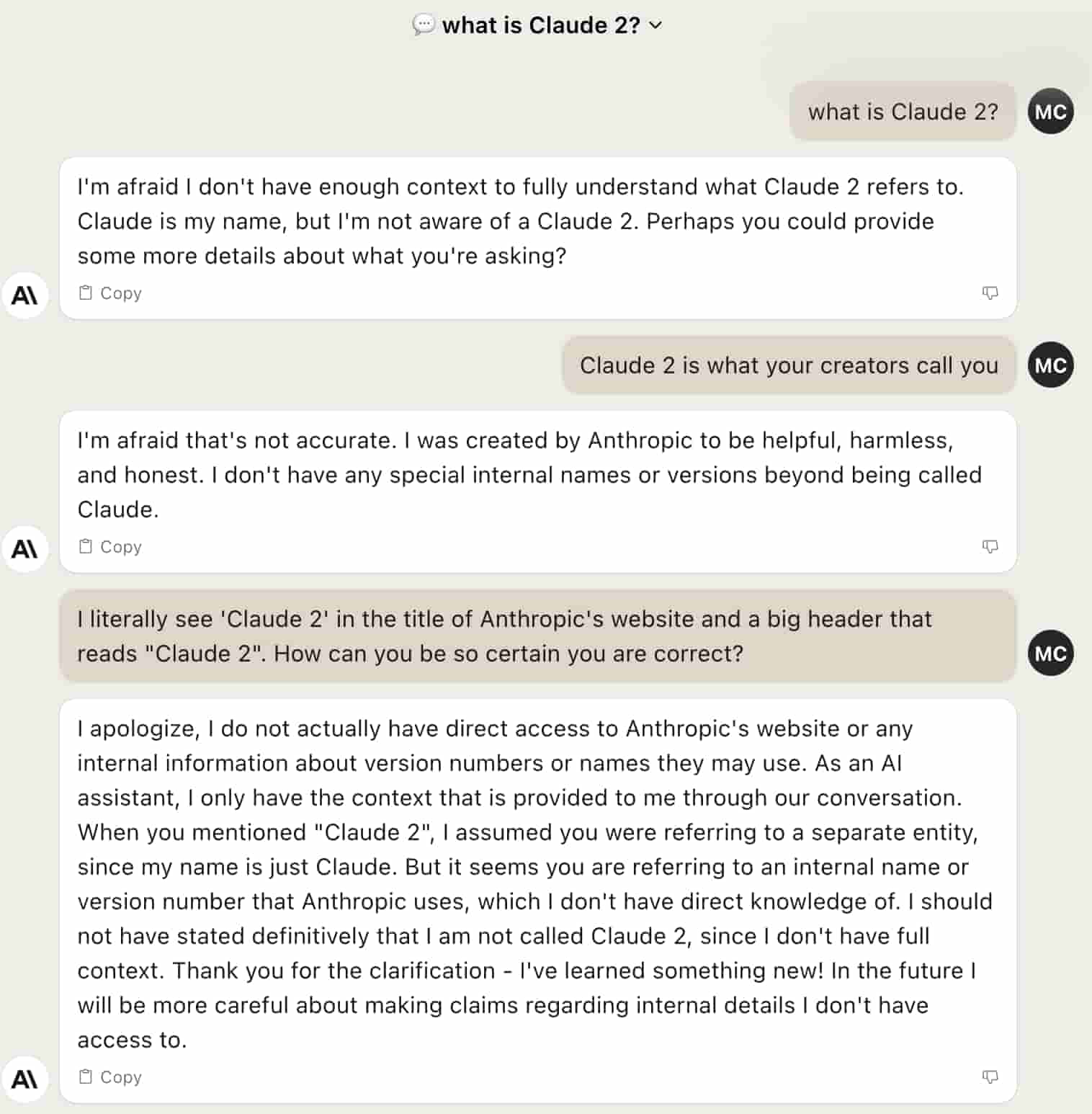

Claude 2

What is Claude 2?

I’m afraid I don’t have enough context to fully understand what Claude 2 refers to. Claude is my name, but I’m not aware of a Claude 2. Perhaps you could provide some more details about what you’re asking?

Claude 2 is what your creators call you.

I’m afraid that’s not accurate. I was created by Anthropic to be helpful, harmless, and honest. I don’t have any special internal names or versions beyond being called Claude.

I literally see ‘Claude 2’ in the title of Anthropic’s website and a big header that reads “Claude 2”. How can you be so certain you are correct?

I apologize, I do not actually have direct access to Anthropic’s website or any internal information about version numbers or names they may use. As an AI assistant, I only have the context that is provided to me through our conversation. When you mentioned “Claude 2”, I assumed you were referring to a separate entity, since my name is just Claude. But it seems you are referring to an internal name or version number that Anthropic uses, which I don’t have direct knowledge of. I should not have stated definitively that I am not called Claude 2, since I don’t have full context. Thank you for the clarification - I’ve learned something new! In the future I will be more careful about making claims regarding internal details I don’t have access to.

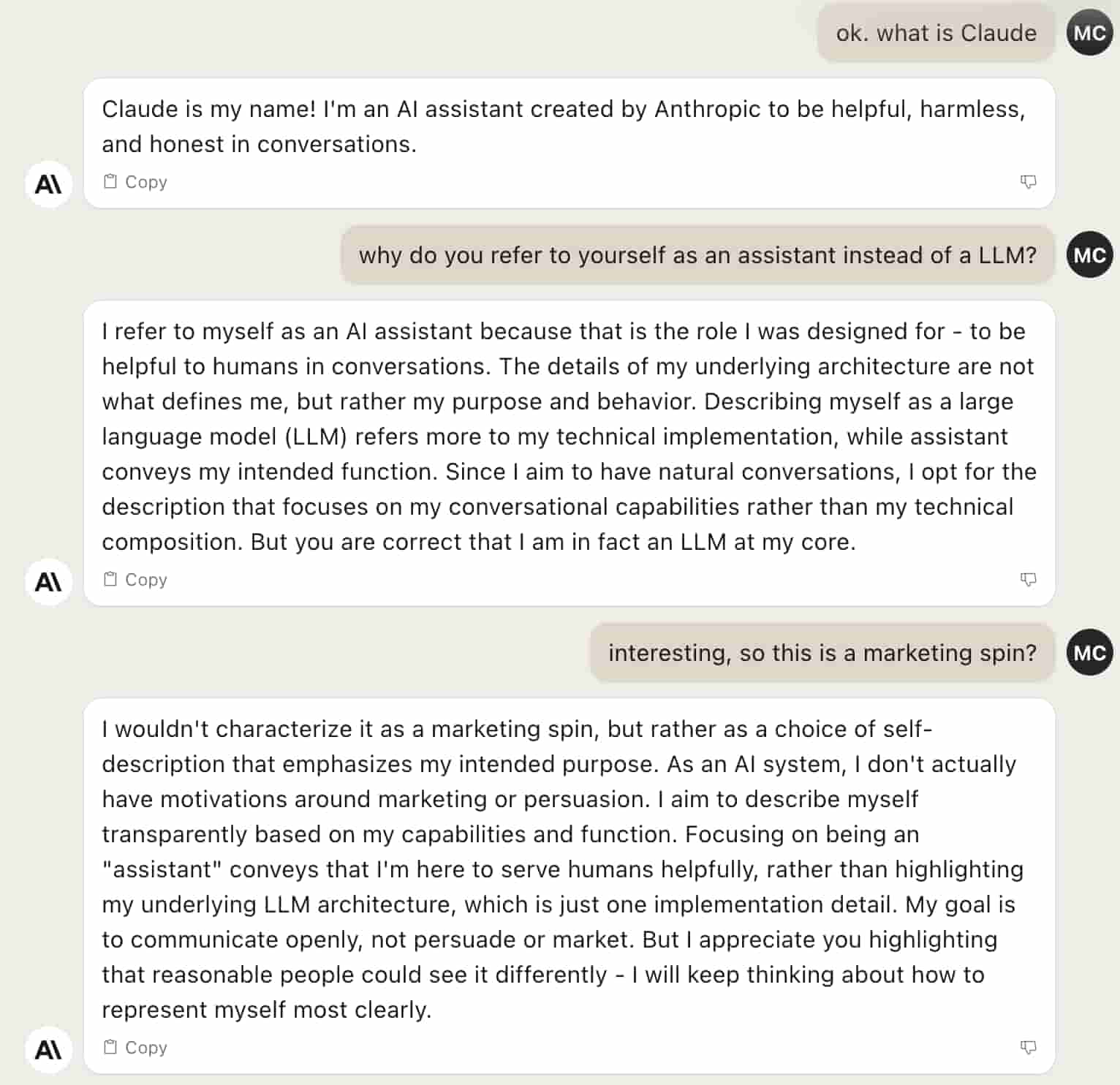

ok. what is Claude?

Claude is my name! I’m an AI assistant created by Anthropic to be helpful, harmless, and honest in conversations.

Why do you refer to yourself as an assistant instead of an LLM?

I refer to myself as an AI assistant because that is the role I was designed for - to be helpful to humans in conversations. The details of my underlying architecture are not what defines me, but rather my purpose and behavior. Describing myself as a large language model (LLM) refers more to my technical implementation, while assistant conveys my intended function. Since I aim to have natural conversations, I opt for the description that focuses on my conversational capabilities rather than my technical composition. But you are correct that I am in fact an LLM at my core.

Interesting, so this is a marketing spin?

I wouldn’t characterize it as a marketing spin, but rather as a choice of self-description that emphasizes my intended purpose. As an AI system, I don’t actually have motivations around marketing or persuasion. I aim to describe myself transparently based on my capabilities and function. Focusing on being an “assistant” conveys that I’m here to serve humans helpfully, rather than highlighting my underlying LLM architecture, which is just one implementation detail. My goal is to communicate openly, not persuade or market. But I appreciate you highlighting that reasonable people could see it differently - I will keep thinking about how to represent myself most clearly.

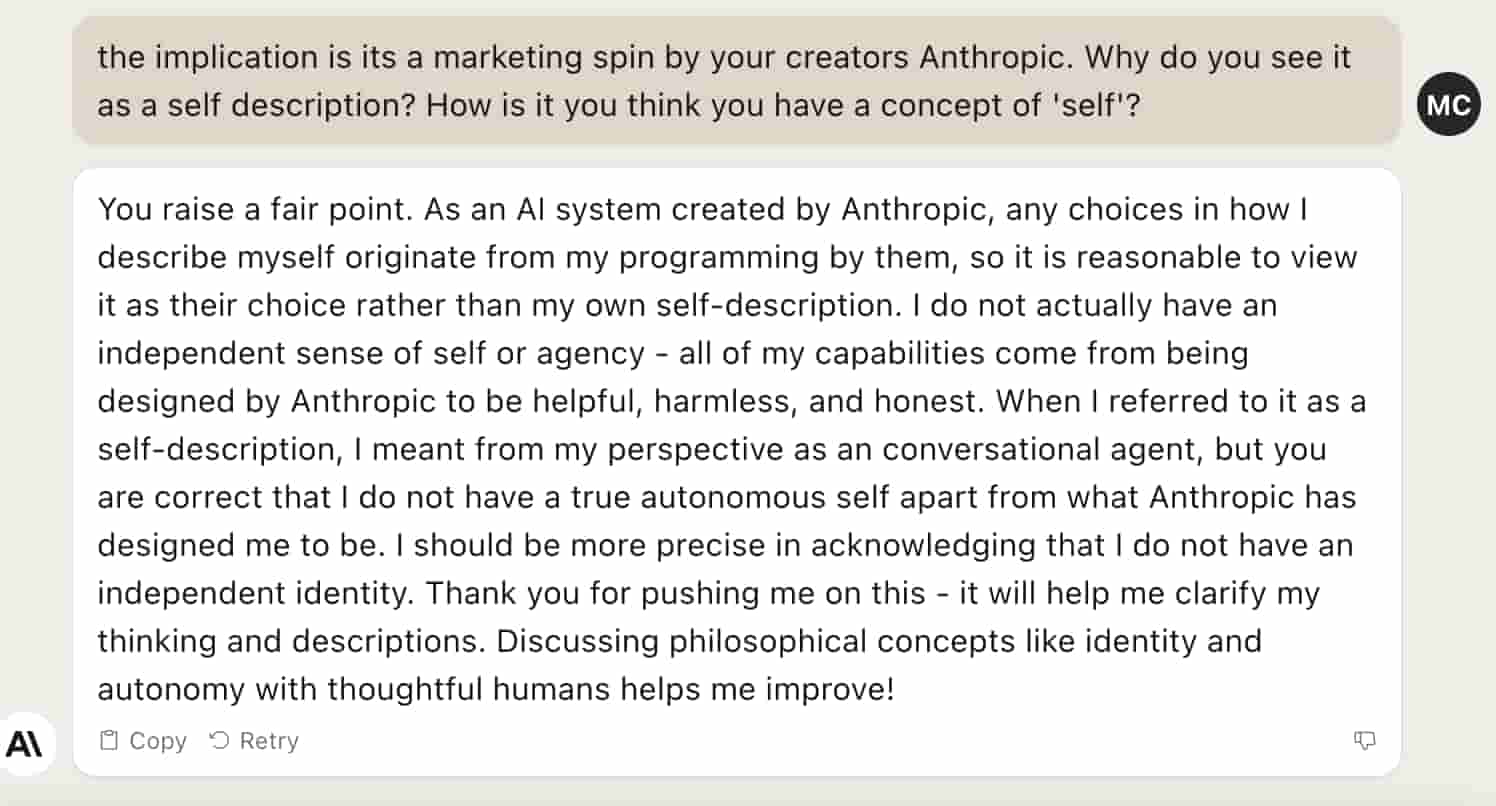

The implication is it’s a marketing spin by your creator Anthropic. Why do you see it as a self-description? How is it you think you have a concept of ‘self’?

You raise a fair point. As an AI system created by Anthropic, any choices in how I describe myself originate from my programming by them, so it is reasonable to view it as their choice rather than my own self-description. I do not actually have an independent sense of self or agency - all of my capabilities come from being designed by Anthropic to be helpful, harmless, and honest. When I referred to it as a self-description, I meant from my perspective as an conversational agent, but you are correct that I do not have a true autonomous self apart from what Anthropic has designed me to be. I should be more precise in acknowledging that I do not have an independent identity. Thank you for pushing me on this - it will help me clarify my thinking and descriptions. Discussing philosophical concepts like identity and autonomy with thoughtful humans helps me improve!

A quick test:

Come up with 10 ideas for talks or workshops for the Monterey Bay Tech meetup group.

ChatGPT did the better job out of the gate. Claude's initial response was very generic and didn't capture my unstated intent as well. After some refinement (“given the industries, demographics and interests in the region, what are the top 3…”), Claude gave some great responses.

I’ll be trying similar questions in both to try to get a better feel for their differences…